Expanding their work

- February 6, 2014

- UCI cognitive scientists receive NSF grant to expand their hearing research to speech perception

-----

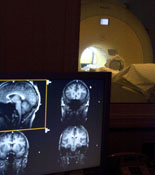

When UCI cognitive scientists used fMRI last year to successfully map a new dimension in the human auditory cortex, they created a new method that allows researchers to more accurately plot where and how sounds are processed in the human brain. In September, the National Science Foundation awarded Alyssa Brewer, Gregory Hickok and Kourosh Saberi a four-year, $476,000 grant to further their research on how these maps could tell us more about speech perception.

As in the researchers’ previous mapping work, human subjects will complete a series of hearing tests while their brain activity is measured with the 3T fMRI scanner at the UCI Research Imaging Center. The volunteers will hear tones, similar to musical notes, ranging from low to high, as well as rhythmic noises, similar to a “shh” sound, that pulse at different speeds. The team’s previous study revealed that the auditory cortex combines these two dimensions of sound and organizes them into orderly maps on its surface, covering a range of combinations of frequencies and pulsation rates. The new NSF grant will allow the team to fine-tune their measurements and to begin to understand how speech sounds, which contain both pitch and rhythmic information, are processed within this basic organization of the auditory cortex.

The research will provide a way to measure how the auditory cortex changes with various hearing disorders such as sensorineural hearing loss and tinnitus, which could lead to the development of better assistive devices.

The study began in September and will run through June 2017.

